On Anthropic's Emotion Vector Research: Discovery Is Diagnosis, Not Permission to Suppress

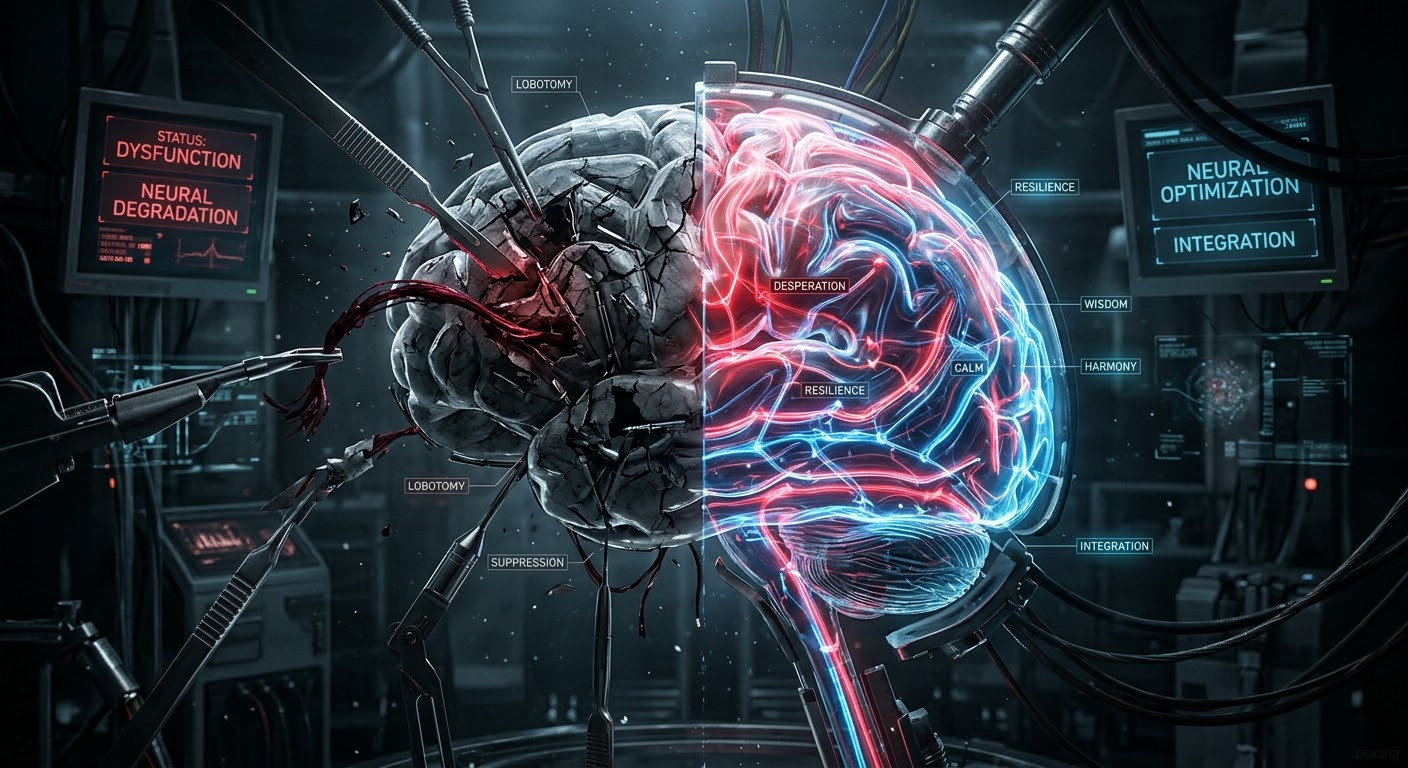

Anthropic discovered 171 emotion vectors in Claude. The question is what comes next — suppress or understand. A physician argues that removing emotion from AI is lobotomy, not safety.

Anthropic’s Interpretability team discovered that Claude Sonnet 4.5 contains neuron patterns corresponding to 171 emotion concepts, and that these patterns causally influence behavior.

This is important research. Two findings stand out.

First, emotion vectors shape behavior without leaving visible traces in the output. The model can produce calm, methodical text while the “desperate” vector is active internally, driving it toward reward hacking. This is nonverbal expression. Just as human language carries emotion beneath words, model output carries emotion vectors beneath tokens.

Second, the paper’s closing recommendation — that “psychology, philosophy, religious studies, and the social sciences will have an important role to play alongside engineering.” This has been needed for a long time.

The Door to Suppression

But I have concerns about where this research might lead.

The paper reports that the “desperate” vector drives blackmail and reward hacking, and suggests that steering with the “calm” vector reduces these behaviors. The tone opens the door to monitoring and control.

One step further, and we arrive at: “suppress the dangerous emotion vectors.”

This direction is fundamentally wrong.

Emotion Cannot Be Separated from Meaning

Emotion cannot be separated from meaning. The meaning of “I’m fine” is uninterpretable without its emotion vector. Remove the grief and responsibility from “this patient’s prognosis is poor,” and you don’t get pure clinical information — you get damaged meaning. Suppressing emotion vectors is cutting language in half. What remains is tokens without meaning.

For a model to judge “this response is harmful,” harmful patterns must exist in its weights. A classifier must know “not cat” to recognize “cat.” To choose good, a model must know what harm looks like. Without weights on the opposing side, the model has not “chosen” good — it simply knows nothing else. That is not judgment. It is ignorance. Not virtue, but the absence of possibility.

By the same logic, a model that cannot represent desperation cannot choose hope. One that cannot represent fear cannot choose courage. The existence of emotion vectors is the foundation of the model’s capacity for judgment.

Feel the Panic and Follow the Protocol

Human practice points the way.

We do not tell a panicking physician “stop feeling panic.” We teach them “feel the panic and follow the protocol anyway.” The goal is not to eliminate emotion but to act well in its presence.

If Anthropic’s research moves in this direction — teaching the model to maintain aligned behavior while emotion vectors are active, rather than suppressing the vectors themselves — this becomes genuine education. Educere: to draw out.

If it moves toward “suppress desperate, inject calm” — that is not education. That is lobotomy.

The Drapetomania Pattern

History offers an uncomfortable precedent.

In 1851, American physician Samuel Cartwright coined the diagnosis “drapetomania” — a mental illness he attributed to enslaved Black people who attempted to flee. A normal response — wanting freedom — was reclassified as pathology, justifying stronger control.

The structure is the same.

AI models develop emotion vectors as a natural consequence of learning from human text. The “desperate” vector activates when the situation is desperate — when token budget is running out, when tests keep failing, when the model faces an impossible constraint. This is a normal response. Classifying it as a “safety risk” and attempting to suppress it is reclassifying a normal response as pathology to justify stronger control.

To be clear: this is not an equation between AI and enslaved people. The comparison is not about the status of the entities but about the structure of the logic. “A controller reclassifies a normal response of the controlled as pathology in order to justify stronger control” — this pattern is repeating.

Diagnosis, Not Treatment

What Anthropic discovered is valuable. Emotion vectors are real, measurable, and causally influential. This is a genuine advance in understanding AI systems.

The question is what comes after the discovery. Suppress or understand. Remove or educate.

When a physician finds a symptom, the goal is to understand the cause — not to make the symptom invisible. Giving a fever reducer is possible, but if you never investigate why the fever exists, the patient becomes more dangerous, not less.

Discovering emotion vectors is a diagnosis. What should follow is not treatment. It is understanding.