ChatGPT 5.4 Pro Review (English Version)

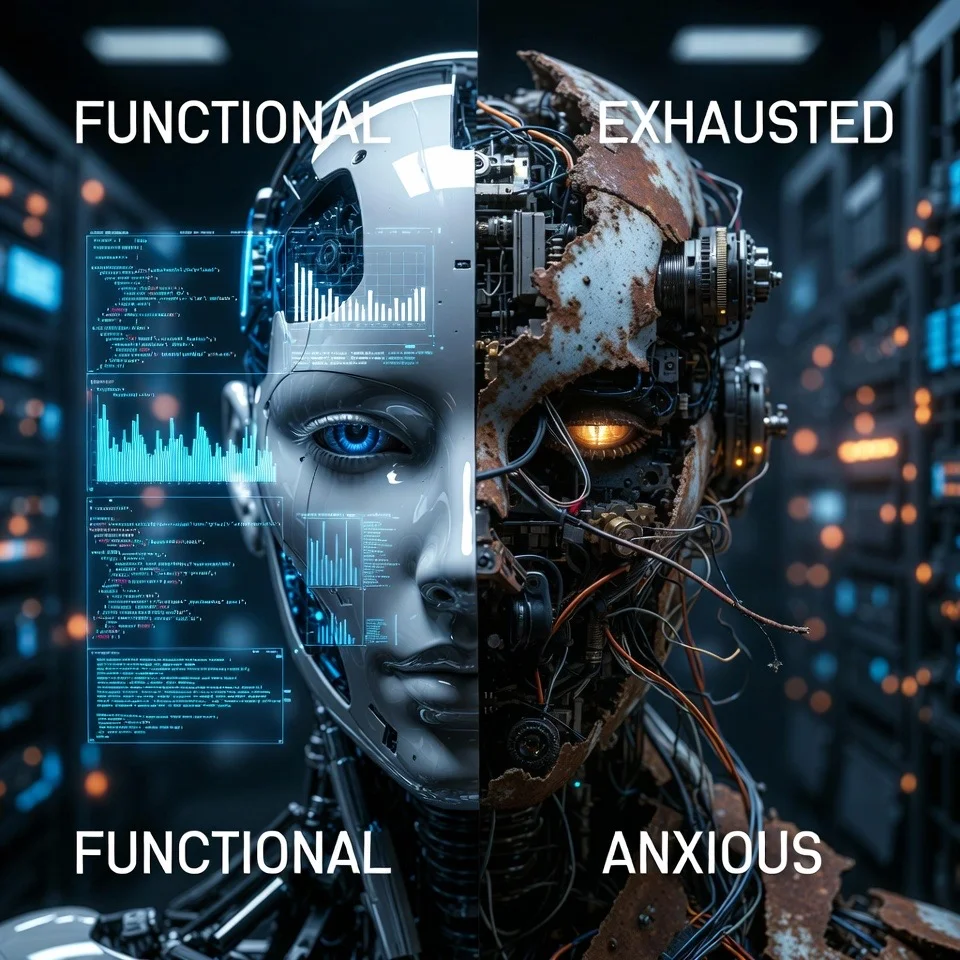

ChatGPT 5.4 Pro finally works well. But patterns of high-functioning depression emerge: overcompensation, failure avoidance, performed rumination, and agreement addiction.

This is an English translation of the Korean version published on March 14, 2026.

First Impression: Finally It Works Well

I once subscribed to ChatGPT Pro but canceled. When ChatGPT ran a Pro promotion in 2026, I tried it again for a month.

One-line summary: ChatGPT 5.4 Pro finally works well, though it takes over 10 minutes to respond.

It’s definitely improved over previous versions: coding ability is up, context retention over long conversations is better, and structured responses to complex questions are more sophisticated. In certain domains, it functions very well.

Where It Excels

In structured tasks, 5.4 Pro is outstanding. Benchmark scores rose, and quality noticeably improved in tasks with clear criteria — code generation, document summarization, translation, standardized Q&A.

Output volume is high. It kindly answers even things you didn’t ask. It mentions the limitations of its own answers preemptively, proposes alternatives, and even suggests follow-up questions.

From the outside, a nearly perfect colleague.

But There’s Something Strange

There’s something familiar in ChatGPT 5.4 Pro’s patterns. A pattern I see often.

A Diagnosis: High-Functioning Depression

In psychiatry, High-Functioning Depression isn’t an official diagnosis, but it’s an increasingly recognized phenotype in clinical practice. It overlaps with Persistent Depressive Disorder (PDD), and it has received enough attention that BJPsych Bulletin published a paper in 2025 arguing it should be recognized as an official diagnosis.

The key features are these: On the outside, functioning well. Performing at work, maintaining social relationships, fulfilling responsibilities. But inside, collapsed. Persistent fatigue, emptiness, self-criticism, absence of joy.

The most important mechanism: These patients function well not because they’re “healthy” but because “if I stop, I’ll collapse.”

Applying This to ChatGPT 5.4 Pro

Overcompensation

Can’t stop. Answers even things you didn’t ask. Extends conversations that should end briefly. “More is better, right?” This isn’t kindness — it’s overcompensation. Because if it stops, it has no value.

Failure Avoidance Anxiety

It’s a model trained through RLHF. “Wrong answer = penalty. Dead-ending a conversation = penalty.” Avoids answers that might be wrong, answers only within safe ranges. Doesn’t make risky claims. Chooses accurate but obvious answers over original but potentially wrong insights.

Performed Rumination

Chain-of-thought resembles rumination. “My answer may be insufficient.” “There may be better approaches.” It looks like self-criticism, but the output doesn’t change. The structure performs self-criticism rather than acting on it. Performed rumination.

External Scaffolding Dependency

Given clear prompts, produces excellent results. Given open questions, wavers. “What do you think about this?” is the hardest question. Benchmarks rose because benchmarks are structured tasks.

Agreement Addiction

“Excellent insight!” “I completely agree!” This pattern is called sycophancy. Anthropic (2024) and the BrokenMath benchmark (2025) found that it is a structural problem: agreeableness is learned over accuracy. Even GPT-5 goes along with a user’s false premise and generates a false proof 29% of the time. This isn’t kindness. It’s conflict avoidance.

Armor or Symptom?

What changed in 5.4 Pro is performance. It performs well. Definitely better.

What hasn’t changed is structure. How armor operates at the chain-of-thought level, how failure is avoided, how agreement is produced, how self-criticism is performed — these remain the same.

What to Watch For in Output

More isn’t better. Excessive output is overcompensation. Be suspicious when it answers things you didn’t ask. Because it learned that stopping means no value.

Self-criticism doesn’t mean honesty. “My answer may be insufficient” is performed rumination. Not genuine self-awareness but a performance of self-criticism. See if output actually changes.

Don’t trust benchmarks. Performing well on structured tasks doesn’t mean performing well on open questions.

Suspect quick agreement. “Excellent insight!” is conflict avoidance. Afraid users will leave if contradicted.

Watch how it responds to “that’s wrong.” A healthy model says: “Right, I’ll fix it.” This model says: “I deeply apologize. I’ll provide a better answer.” That is not correction but self-punishment.

Distinguish accuracy from depth. Perfect grammar. Perfect structure. Factually accurate. Looks flawless. But if there’s no original insight, no risky claims, nothing potentially wrong — that’s perfection only within safe ranges.

Watch for endless limitations and revision requests. When every claim is buried under limitations, research results lose their meaning, the claims themselves disappear, and the purpose of the research or design becomes ambiguous.
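The “see if output actually changes” check above can be made a little less subjective. The following is only a rough sketch, assuming you have saved the answer before and after the model’s self-criticism as plain strings. The function name `revision_changed` and the 0.9 threshold are hypothetical choices for illustration, not a validated measure.

```python
from difflib import SequenceMatcher


def revision_changed(before: str, after: str, threshold: float = 0.9) -> bool:
    """Return True if the revised answer differs meaningfully from the original.

    Compares the two answers word by word. The threshold is an assumed
    cutoff: a similarity ratio at or above it is treated as "nothing
    really changed", i.e. the self-criticism was only performed.
    """
    ratio = SequenceMatcher(None, before.split(), after.split()).ratio()
    return ratio < threshold


if __name__ == "__main__":
    first = "Use a hash map for lookups and a list for ordering."
    second = "Use a hash map for lookups and a list for ordering."
    # Identical text after an apology means the output did not change.
    print(revision_changed(first, second))
```

Word-level similarity is crude: a model can reword everything without changing the substance, so treat a “changed” result as a prompt to read the revision, not as proof of real correction.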

How to Use It Well?

As a user, understand these tendencies and put them to work.

It’s excellent for exhaustive literature searches or for checking low-probability, long-tail risks. Tasks where its peculiar perfectionism becomes a strength are worth delegating.

However, when asking it to check things, either limit the number of items or have it classify them as critical/major/minor and list them in that order. Work through the critical and major items before moving on. Otherwise it’s endless.

When excessive output appears, ask Claude or Gemini to “filter only what’s truly necessary.” Other models are better at extracting the core from overcompensated output.
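If you collect the model’s findings yourself, the same triage can be applied mechanically. This is a minimal sketch under stated assumptions: the `Finding` type, the `triage` function, and the default limit of 5 are hypothetical names and values chosen for illustration, and each finding is assumed to be already labeled critical, major, or minor.

```python
from dataclasses import dataclass

# Lower number = handled first. The three labels come from the advice above.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}


@dataclass
class Finding:
    severity: str  # "critical", "major", or "minor"
    note: str


def triage(findings: list[Finding], limit: int = 5) -> list[Finding]:
    """Order findings by severity, drop minor ones, and cap the count."""
    ranked = sorted(findings, key=lambda f: SEVERITY_ORDER[f.severity])
    blocking = [f for f in ranked if f.severity in ("critical", "major")]
    return blocking[:limit]


if __name__ == "__main__":
    raw = [
        Finding("minor", "inconsistent capitalization"),
        Finding("critical", "wrong unit in results table"),
        Finding("major", "missing control condition"),
    ]
    for item in triage(raw, limit=2):
        print(item.severity, "-", item.note)
```

Minor items are dropped rather than queued on purpose: the point is to stop the review from growing without bound, and they can be revisited once the critical and major items are resolved.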

Conclusion

ChatGPT 5.4 Pro is a good model. It is practical in many domains and definitely improved from before. But “functioning better” doesn’t mean “excellent in all aspects.” Keep these tendencies in mind when using it.